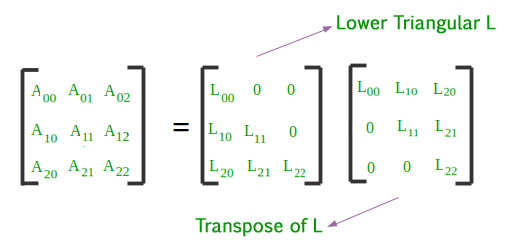

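The Cholesky decomposition (also spelled Choleski) factors a symmetric positive definite matrix A into the product A = LL^T, where L is a lower-triangular matrix with positive diagonal entries and L^T is its transpose. Once L is known, a linear system Ax = b can be solved with two inexpensive triangular solves. The factor also gives the determinant directly, because det A is the square of the product of the diagonal entries of L. It can also be used to compute the inverse, and it is a building block in eigenvalue computations, for example when reducing the generalized symmetric eigenvalue problem Ax = λBx to standard form. The algorithm resembles Gaussian elimination, but because it exploits symmetry it computes only one triangular factor instead of two: the entries of L are obtained row by row (or column by column) directly from the entries of A.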
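Writing out A = LL^T entry by entry gives explicit formulas for L: on the diagonal, L_jj = sqrt(a_jj - sum over k < j of L_jk^2), and below it, L_ij = (a_ij - sum over k < j of L_ik L_jk) / L_jj. The sketch below is a minimal pure-Python version of this row-by-row (Cholesky-Banachiewicz) ordering, assuming the matrix is given as a list of lists; the function name `cholesky` and the 3 × 3 example matrix are our own choices for illustration, and real code would normally call an optimized library routine instead.

```python
import math

def cholesky(a):
    """Return lower-triangular L with a == L L^T.

    Assumes a is a symmetric positive definite matrix stored as a list of lists.
    Entries are computed row by row (Cholesky-Banachiewicz ordering).
    """
    n = len(a)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            # Sum of products of already-computed entries in rows i and j.
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                d = a[i][i] - s
                if d <= 0.0:
                    # A non-positive value under the square root means no factor exists.
                    raise ValueError("matrix is not positive definite")
                L[i][i] = math.sqrt(d)
            else:
                L[i][j] = (a[i][j] - s) / L[j][j]
    return L

A = [[4.0, 12.0, -16.0],
     [12.0, 37.0, -43.0],
     [-16.0, -43.0, 98.0]]
L = cholesky(A)
print(L)  # [[2.0, 0.0, 0.0], [6.0, 1.0, 0.0], [-8.0, 5.0, 3.0]]

# det A is the square of the product of the diagonal entries of L.
det = math.prod(L[i][i] for i in range(len(L))) ** 2
print(det)  # 36.0
```

For this matrix every intermediate value is exact in floating point, so the factor comes out as small integers; in general the entries of L are irrational because of the square roots.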

Origin

The method is named after André-Louis Cholesky, a French military officer and geodesist who developed it for the least-squares computations that arise in surveying. Cholesky died in 1918 during the First World War, and the method was published posthumously in 1924 by a fellow officer, Commandant Benoît. Because it exploits symmetry, the method needs roughly half the arithmetic of general Gaussian elimination, which made it attractive for computation by hand. The saving still matters on modern computers: factoring an n × n matrix costs about n^3/3 floating-point operations, compared with about 2n^3/3 for an LU decomposition.

Uses of the Cholesky Decomposition

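The Cholesky decomposition is particularly useful for solving systems of linear equations whose coefficient matrix is symmetric and positive definite. It is a direct method: after a single factorization, each solution requires only a forward substitution with L and a back substitution with L^T, with no iteration or convergence criterion, and the same factor can be reused for many right-hand sides. A symmetric matrix A is positive definite when x^T A x > 0 for every nonzero vector x. This condition guarantees that A is nonsingular and that every square root taken during the factorization is of a positive number, so the algorithm never breaks down. Such matrices are common in optimization, for example as Hessian matrices in Newton-type methods for strongly convex objectives, or as the matrix X^T X in the normal equations of a least-squares problem whose design matrix X has full column rank. This makes the Cholesky decomposition an essential tool in that field.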
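The two triangular solves can be sketched in pure Python as follows. This is an illustrative version, assuming list-of-lists matrices; the helper names `cholesky` and `cholesky_solve` are hypothetical, and the factorization routine is repeated so the block runs on its own. The factor is computed once and then reused for two different right-hand sides.

```python
import math

def cholesky(a):
    """Lower-triangular L with a == L L^T (a: symmetric positive definite, list of lists)."""
    n = len(a)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                d = a[i][i] - s
                if d <= 0.0:
                    raise ValueError("matrix is not positive definite")
                L[i][i] = math.sqrt(d)
            else:
                L[i][j] = (a[i][j] - s) / L[j][j]
    return L

def cholesky_solve(L, b):
    """Solve (L L^T) x = b, given the Cholesky factor L."""
    n = len(b)
    # Forward substitution: solve L y = b from the top row down.
    y = [0.0] * n
    for i in range(n):
        y[i] = (b[i] - sum(L[i][k] * y[k] for k in range(i))) / L[i][i]
    # Back substitution: solve L^T x = y from the bottom row up; (L^T)[i][k] is L[k][i].
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (y[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x

A = [[4.0, 12.0, -16.0],
     [12.0, 37.0, -43.0],
     [-16.0, -43.0, 98.0]]
L = cholesky(A)                                # factor once: about n^3/3 operations
x = cholesky_solve(L, [0.0, 6.0, 39.0])
print(x)                                       # [1.0, 1.0, 1.0]
e1 = cholesky_solve(L, [4.0, 12.0, -16.0])     # reuse the same factor
print(e1)                                      # [1.0, 0.0, 0.0]
```

Each additional right-hand side costs only about n^2 operations, which is why factoring once and solving many times is the usual pattern.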

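In addition, the Cholesky decomposition is used throughout statistics and machine learning to work with covariance matrices, which are symmetric and, when nondegenerate, positive definite. For example, evaluating a multivariate Gaussian density or fitting a Gaussian process model requires the log-determinant of the covariance matrix and products with its inverse, and both can be obtained from the Cholesky factor without ever forming the inverse explicitly. Finally, because the algorithm is a short, fixed sequence of explicit steps, it is easy to follow and verify by hand, unlike some numerical methods whose inner workings are hidden from the user.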
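As an illustration, the log-density of a multivariate normal distribution N(mean, cov) can be evaluated from the Cholesky factor alone. Two identities do the work: log det(cov) = 2 · sum of log L_ii, and the quadratic form r^T cov^(-1) r equals z · z, where z solves L z = r. The sketch below assumes pure Python lists; the function name `gaussian_logpdf` is our own, and the factorization routine is repeated so the block is self-contained.

```python
import math

def cholesky(a):
    """Lower-triangular L with a == L L^T (a: symmetric positive definite, list of lists)."""
    n = len(a)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                d = a[i][i] - s
                if d <= 0.0:
                    raise ValueError("matrix is not positive definite")
                L[i][i] = math.sqrt(d)
            else:
                L[i][j] = (a[i][j] - s) / L[j][j]
    return L

def gaussian_logpdf(x, mean, cov):
    """Log-density of N(mean, cov) at x, computed via the Cholesky factor of cov."""
    L = cholesky(cov)
    n = len(x)
    r = [x[i] - mean[i] for i in range(n)]
    # Forward substitution: solve L z = r, so that z . z == r^T cov^{-1} r.
    z = [0.0] * n
    for i in range(n):
        z[i] = (r[i] - sum(L[i][k] * z[k] for k in range(i))) / L[i][i]
    quad = sum(v * v for v in z)
    # det(cov) = (prod of L_ii)^2, hence log det(cov) = 2 * sum(log L_ii).
    log_det = 2.0 * sum(math.log(L[i][i]) for i in range(n))
    return -0.5 * (n * math.log(2.0 * math.pi) + log_det + quad)

# Correlated 2-D example: unit variances, correlation 0.8.
print(gaussian_logpdf([1.0, 0.0], [0.0, 0.0], [[1.0, 0.8], [0.8, 1.0]]))
```

Working through L instead of an explicit inverse is both cheaper and numerically more robust, and it also avoids overflow in the determinant, since only the logarithms of the diagonal entries are summed.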

Advantages and Disadvantages

One significant advantage of the Cholesky decomposition is that it is computationally efficient. It requires no iteration and no explicit matrix inversion, it needs about half the operations of an LU decomposition, and for positive definite matrices it is numerically stable without pivoting. The resulting lower-triangular factor can then be used directly to solve linear systems. These properties make it a popular choice for large-scale simulations, where computational cost is often a significant concern. Another advantage is that it can be used to generate correlated random numbers. If z is a vector of independent standard normal variables and the covariance matrix factors as A = LL^T, then the vector x = Lz has covariance L·I·L^T = LL^T = A, which is exactly the correlation structure described by A. This is a standard technique in Monte Carlo simulation.
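A small experiment shows the random-number construction at work. This is a sketch under stated assumptions: it uses only Python's standard `random` module, the target covariance (unit variances, correlation 0.8) and the helper name `correlated_normal` are illustrative choices, and the factorization routine is repeated so the block runs on its own. The sample moments should land close to the target values, up to sampling noise.

```python
import math
import random

def cholesky(a):
    """Lower-triangular L with a == L L^T (a: symmetric positive definite, list of lists)."""
    n = len(a)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                d = a[i][i] - s
                if d <= 0.0:
                    raise ValueError("matrix is not positive definite")
                L[i][i] = math.sqrt(d)
            else:
                L[i][j] = (a[i][j] - s) / L[j][j]
    return L

def correlated_normal(L, rng):
    """One draw x = L z with z ~ N(0, I); x then has covariance L L^T."""
    n = len(L)
    z = [rng.gauss(0.0, 1.0) for _ in range(n)]
    # L is lower-triangular, so component i only needs z[0..i].
    return [sum(L[i][k] * z[k] for k in range(i + 1)) for i in range(n)]

cov = [[1.0, 0.8],
       [0.8, 1.0]]             # target covariance
L = cholesky(cov)              # factor once, reuse for every draw
rng = random.Random(42)        # fixed seed for reproducibility
samples = [correlated_normal(L, rng) for _ in range(20000)]

# Sample estimates of E[x x^T], which equals the covariance because the mean is zero.
n = len(samples)
var0 = sum(s[0] * s[0] for s in samples) / n
var1 = sum(s[1] * s[1] for s in samples) / n
cov01 = sum(s[0] * s[1] for s in samples) / n
print(round(var0, 2), round(var1, 2), round(cov01, 2))  # roughly 1.0, 1.0 and 0.8
```

Here L = [[1, 0], [0.8, 0.6]], so the second component is 0.8·z0 + 0.6·z1, which has variance 0.64 + 0.36 = 1 and covariance 0.8 with the first component.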

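However, the Cholesky decomposition also has some disadvantages. The most significant limitation is that it applies only to positive definite matrices. If the matrix is not positive definite, the algorithm breaks down when it encounters a zero or negative value under a square root, and no Cholesky factor exists. This failure is itself useful: attempting a Cholesky factorization is a common, inexpensive way to test whether a symmetric matrix is positive definite. The method also requires the matrix to be symmetric, because any product LL^T is symmetric. For a nonsymmetric matrix, a general factorization such as LU decomposition with partial pivoting must be used instead; factoring only the symmetric part of the matrix does not produce a solution of the original system. For symmetric matrices that are indefinite, variants such as the LDL^T factorization are used.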
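The breakdown described above can be turned into a positive-definiteness test, sketched below in pure Python. The function name `is_positive_definite` is hypothetical, the symmetry check uses exact comparison (a tolerance would be more appropriate for computed matrices), and the factorization routine is repeated so the block is self-contained. Library routines behave analogously; NumPy's `numpy.linalg.cholesky`, for example, raises `numpy.linalg.LinAlgError` when the factorization fails.

```python
import math

def cholesky(a):
    """Lower-triangular L with a == L L^T; raises ValueError if a is not positive definite."""
    n = len(a)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            if i == j:
                d = a[i][i] - s
                if d <= 0.0:
                    raise ValueError("matrix is not positive definite")
                L[i][i] = math.sqrt(d)
            else:
                L[i][j] = (a[i][j] - s) / L[j][j]
    return L

def is_positive_definite(a):
    """Return True if the square matrix a is symmetric positive definite."""
    n = len(a)
    if any(a[i][j] != a[j][i] for i in range(n) for j in range(i)):
        return False  # Any product L L^T is symmetric, so no factor can exist.
    try:
        cholesky(a)
    except ValueError:
        return False  # Breakdown: a non-positive value appeared under a square root.
    return True

print(is_positive_definite([[4.0, 12.0, -16.0],
                            [12.0, 37.0, -43.0],
                            [-16.0, -43.0, 98.0]]))            # True
print(is_positive_definite([[1.0, 2.0], [2.0, 1.0]]))  # False: eigenvalues 3 and -1
print(is_positive_definite([[1.0, 1.0], [1.0, 1.0]]))  # False: only semidefinite
print(is_positive_definite([[2.0, 1.0], [0.0, 2.0]]))  # False: not symmetric
```

The semidefinite case fails because a zero pivot appears; pivoted variants of the algorithm exist for such matrices, but the plain factorization requires strict positive definiteness.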

Summary

In summary, the Cholesky decomposition has several advantages, such as computational efficiency and its use in generating correlated random numbers. However, it also has limitations, most notably the requirement that the matrix be symmetric and positive definite. Despite these shortcomings, the Cholesky decomposition remains a valuable tool in linear algebra with a broad range of applications.